Tapping the Potential: Secure Chunk-based Deduplication of Encrypted Data for Cloud Backup

| Venue | Category |

|---|---|

| IEEE CNS'18 | Secure Deduplication |

Tapping the Potential: Secure Chunk-based Deduplication of Encrypted Data for Cloud Backup1. SummaryMotivation of this paperTapping the Potential Implementation and Evaluation2. Strength (Contributions of the paper)3. Weakness (Limitations of the paper)4. Future Works

1. Summary

Motivation of this paper

Existing the secure deduplication designs are at odds with the real-world dedupe requirements in terms of security and performance. (pay little attention to the challenges and practical requirements of chunk-based deduplication (CBD))

The practical requirements:

- Low-entropy chunk: brute-force attack for predictable files. CBD will amplify the attack efficacy due to the potentially much lower entropy contained in a small chunk.

Question: how to reduce the risk of the information leakage with minimal impact on the underlying deduplication routine?

- Increased system operation overhead: incurs higher latency and computation overhead, since the client needs to run the key generation protocol with other online parties (a key server or peer clients)

Question: can it speed up the key generation while still ensuring an effective deduplication function?

- Practical dedupe performance: in addition to the deduplication ratio, it also needs to concern chunk fragmentation level, or restore speed. A high level fragmentation level typically adversely affects the system read performance and further increase the data restore cost.

Question: any secure chunk-level dedupe design should provide a read performance on par with plaintext CBD practice.

This paper intends to solve those three challenges.

Tapping the Potential

- Main idea: using randomized encryption will completely protect the low-entropy chunks

will outright incapacitate the deduplication, this is the desired asymmetry between security and performance, but only with minimal dedupe performance loss.

- System Model This paper focuses on protecting the confidentiality of predictable data

It assumes it can achieve the semantic security for unpredictable data with CE.

All the communication channels between clients, the key server, and the backup storage system are secure.

A ideal functionality :

- input - client: chunk , key server: a chosen secret

- output - client: the chunk key , key server: a sign, storage server: the ciphertext of chunk

Goal: a probabilistic polynomial-time (PPT) adversary cannot distinguish the real-world execution of the proposed scheme.

- Randomized Oblivious Key Generation (ROKG) Chunk encryption key can be generated by running a secure (oblivious) two-party computation between the client and the key server.

the key server learns nothing on the client's input and algorithm output client cannot infer key server's secret

Some points:

- a chunk obfuscation algorithm takes as input the chunk data , a random number , then outputs the obfuscated chunk data .

- The modified version of blind RSA signature

- In the system setup phase, the key server would generate the pairs of RSA sets, for each user, it would choose only one from the this set and blind this with the user's ID.

The key design is it uses pairs of RSA, so that given any compromised client out of clients in the system

the adversary can only infer at most client's data on storage servers. ()

It can tweak the parameter to accommodate the real network scale.

the number of compromised machines depends on company's size but also

- Impact on deduplication Model: the store data:

: the size of data that cannot be deduplicated across all the users : the size of data that have been deduplicated degradation ratio:

This can proves that when outsizes significantly, selecting a small or moderate will not introduce an obvious performance penalty.

the workload is deduplication-unfriendly.

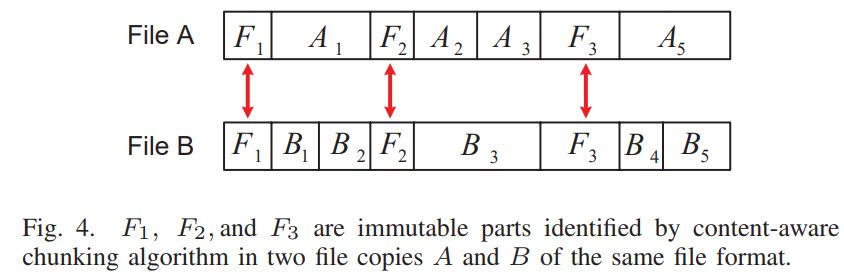

- Efficiency improvment for frequent insensitive data In file level, it extracts the immutable parts and utilize them as a file fingerprint

accelerate the key generation

- Slowing down online brute-force attack

- per-backup rate-limiting policy: Given the projected backup data size and expected chunk size, it can get the budget to bound the number of requests that are allowed to be processed by key server.

- the request can be processed during a prescribed time window.

- Improving Data Restore Speed This proposed scheme will naturally enable better read performance for a user

allow a duplicate chunk copy under one secret to be kept in the storage without referring it to an existing copy under another secret in an old container.

Further improve the read performance: reconstruction-aware chunk placement mechanism

enforce a spatial locality for chunks chunks under the same key server secret are stored close to each other.

a slight loss of deduplication so as to achieve the desired security objectives, the chunk fragmentation level for user backup is also reduced.

Implementation and Evaluation

- Evaluation Dataset: FSL MacOS Snapshot-(variable-sized chunking with average chunk size 8KB) Size: the total size before deduplication is 463TB

TCP-based randomized oblivious key generation protocol: Python Deduplication simulator: C Restore simulator: C

2. Strength (Contributions of the paper)

- this work proposes a secure deduplication considering the case for multi-users scenario. Its idea is very simple but effective. Besides the gain in security, but also for the aspect of better read performance.

3. Weakness (Limitations of the paper)

- For each chunk, this scheme needs to generate a separate key which incurs a high overhead. (although its file-level key scheme can mitigate this impact)

4. Future Works

- The key issue of this paper is how to guarantee the security of low-entropy chunks. And it argues other methods by saying that they do not consider the protection of low-entropy chunks and practical dedupe performance.

- One insight from this paper is in its threat model: it considers the case of multi-client compromise resilience, which is always be ignored in many papers.